Riptide Platform Architecture

One foundation. Many applications.

Riptide Platform SDK

Overview

The Riptide Platform SDK is an enterprise-grade development framework for .NET 8+ applications that provides a comprehensive suite of foundational services designed to accelerate development while ensuring consistency, reliability, and best practices across your entire application portfolio. It delivers production-ready implementations of cross-cutting concerns that every modern enterprise application requires.

Purpose

Traditional enterprise application development requires teams to repeatedly solve the same fundamental challenges: logging, configuration management, dependency injection, monitoring, multi-tenancy, and testing infrastructure. Riptide Platform SDK solves this by:

- Standardizing Cross-Cutting Concerns: Consistent implementations across all applications

- Accelerating Time-to-Market: Pre-built, tested infrastructure components

- Enforcing Best Practices: Clean architecture patterns built into the framework

- Reducing Technical Debt: Well-documented, maintainable code from day one

- Enabling Enterprise Scale: Multi-tenant, observable, configurable by design

- Simplifying Testing: Comprehensive testing utilities and patterns

Key Capabilities

Production-Ready Infrastructure

- Identity Management: Unified authentication with three deployment modes (Self-Contained, SSO, Application Manager) and capability-based authorization

- Enterprise Logging: Structured logging with correlation tracking, pattern-based masking, and extensible provider support

- Configuration Management: Multi-source configuration with secrets management and value monitoring patterns

- Monitoring Abstraction: Metrics collection with DataDog and OpenTelemetry backend support

- Persistence: Database provider abstraction with EF Core supporting SQLite, PostgreSQL, and SQL Server

- Dependency Injection: Attribute-based service registration with automatic assembly scanning

- Tenant Context Patterns: Foundational abstractions for multi-tenant applications

- Security: Input sanitization, output encoding, and OWASP-aligned protection against XSS, injection, and path traversal

- Documentation: Embeddable markdown documentation viewer with navigation, search, and Mermaid diagrams

- AI Agent Skills: Pre-built skill files and context documents that give AI coding agents deep SDK knowledge for production-ready AI-assisted development

- Testing Patterns: Base classes and examples for unit, integration, and architecture tests

- Cryptographic Licensing: RSA-4096 signature-based license protection with offline validation

Clean Architecture Foundation

- Layered Design: Clear separation between Domain, Application, Infrastructure, and Presentation

- SOLID Principles: Built-in patterns that enforce maintainable, testable code

- Domain-Driven Design: Value objects, entities, and aggregates with validation

- Command Query Separation: Clear patterns for reads vs. writes

- Repository Pattern: Abstracted data access with provider flexibility

Developer Experience

- Minimal Configuration: Convention-over-configuration with sensible defaults

- Fluent APIs: Intuitive, discoverable service configuration

- Rich Documentation: Comprehensive XML documentation and usage examples

- Type Safety: Strong typing throughout with compile-time validation

- IntelliSense Support: Full IDE integration for productivity

- Agentic AI Support: Skill files that enable AI coding assistants to provide accurate, context-aware suggestions based on deep SDK knowledge

Core Components

1. Logging Component

Structured logging framework with Console, File, and DataDog provider support. Includes correlation tracking, pattern-based sensitive data masking, and ASP.NET Core middleware.

Key Features:

- Structured logging with JSON format

- Pattern-based PII masking (email, SSN, credit card formats)

- DataDog provider for sending logs to DataDog Logs API

- External provider framework for Elastic and custom endpoints

- Request/response logging middleware

- Health check integration

2. Configuration Component

Multi-source configuration with Local, Application Manager, Azure Key Vault, and Strongbox providers. Supports file watching and IOptionsMonitor patterns for value reloading.

Key Features:

- Multiple provider support with failover

- Secrets management with Key Vault and Strongbox

- Application Manager integration for centralized configuration

- File watching for configuration value changes

- IOptionsMonitor integration for reactive configuration

- Provider health checks

3. Monitoring Component

Metrics collection abstraction with DataDog and OpenTelemetry provider support. Push metrics to monitoring backends with tenant tagging.

Key Features:

- DataDog and OpenTelemetry providers

- In-memory provider for testing

- Tenant tagging for metrics

- Monitoring attributes (

[TrackMetrics],[MonitorPerformance]) - Health check integration

4. Dependency Injection Component

Attribute-based service registration using [AutoRegister] with automatic assembly scanning and lifecycle management.

Key Features:

[AutoRegister]attribute for declarative registration- Assembly scanning and service discovery

- Multiple interface registration

- Keyed services and service replacement

- Tenant context middleware integration

5. Tenant Isolation Component

Foundational abstractions for multi-tenant applications including domain models, interfaces, and row-level isolation patterns.

Key Features:

- Tenant domain value objects and entities

[RequiresTenant]attribute- ASP.NET Core middleware foundation

- EF Core query filter patterns and examples

- Extensible tenant resolver interfaces

6. Testing Component

Base classes and patterns for testing clean architecture applications with examples for architecture tests, builders, and integration testing.

Key Features:

- Unit and integration test base classes

- Architecture test examples (NetArchTest patterns)

- Builder pattern documentation

- In-memory provider implementations

- Health check testing utilities

- Integration test helpers

- Mock data generation

7. Identity Management Component

Unified authentication framework supporting three deployment models: Self-Contained identity, SSO integration, and Application Manager. Switch between modes with configuration changes—no code modifications required.

Key Features:

- Three identity modes: Self-Contained, Single Sign-On, and Application Manager

- Self-contained mode with BCrypt password hashing and JWT tokens

- SSO integration with Azure Entra ID (extensible to Google, Okta, etc.)

- Application Manager integration with centralized identity brokering and capability-based authorization

- Multi-database support (SQLite, PostgreSQL, SQL Server)

- Configuration-based mode switching

- Declarative capability authorization with custom attributes

- Multi-tenancy support with trial management

- Session management with refresh tokens

- Account lockout and security features

Identity Modes:

Self-Contained: Complete embedded identity system with local user storage, perfect for standalone deployments, edge computing, and air-gapped environments. Ships with default admin user and provides full user management.

Single Sign-On (SSO): OAuth2/OIDC integration with enterprise identity providers. Plugin architecture supports Azure Entra ID out-of-the-box with extensibility for additional providers. Optional group-based access restrictions.

Application Manager: Centralized identity brokering and authorization for multi-application deployments. Federates authentication across enterprise SSO, public IdPs, local passwords, and trial tokens. Provides role-based access control with granular capability definitions, trial management, and automatic tenant provisioning.

8. Licensing Component

Enterprise-grade RSA-4096 cryptographic licensing system for protecting your applications with offline license validation. Generate tamper-proof license tokens without requiring online activation or phone-home functionality.

Key Features:

- RSA-4096 digital signature-based licensing

- Offline license validation (no phone-home required)

- Tamper-proof license tokens with cryptographic verification

- Extensible license model for custom applications

- LicenseGenerator CLI tool for development and production licenses

- Comprehensive key management and security documentation

- InstallationId binding to prevent license sharing

- Support for perpetual and time-limited licenses

9. Security Component

OWASP-aligned input sanitization, output encoding, and protection against common web vulnerabilities including XSS, SQL injection, command injection, and path traversal.

Key Features:

- Input sanitization engine with configurable strictness levels

- HTML encoding, JavaScript encoding, and URL encoding utilities

- SQL injection pattern detection and prevention

- Command injection and path traversal protection

- Configurable allow/deny lists for input validation

- ASP.NET Core middleware integration

- Audit logging of security events

10. Documentation Component

Embeddable documentation viewer that renders markdown files as a browsable microsite with automatic navigation, full-text search, and Mermaid diagram support.

Key Features:

- Markdown rendering with Markdig advanced extensions

- Automatic navigation tree from filesystem or toc.json manifest

- Full-text search with in-memory indexing and highlighted snippets

- Mermaid diagram support for architecture and flow diagrams

- HTMX-powered page transitions without full reloads

- Responsive layout with collapsible sidebar

- Application Manager integration for documentation portal architecture

11. AI Agent Skills

Pre-built skill files and context documents that give AI coding agents—Claude, GitHub Copilot, and others—deep knowledge of the Riptide Platform SDK. Instead of discovering APIs through trial and error, AI assistants start every session with the correct middleware pipeline order, component registration sequences, interface references, and architecture patterns.

Key Features:

- Claude Code skill (SKILL.md) with critical rules, interface quick reference, and namespace mappings

- Complete component API reference covering registration, middleware, and usage for all SDK components

- Configuration reference with full appsettings.json structure and per-component options

- Annotated code examples for common integration scenarios

- AI-AGENT-CONTEXT.md quick-start document for non-specific agents and IDE assistants

- Zero runtime overhead—skill files ship alongside the SDK and are consumed only by AI tooling

Integration Points

ASP.NET Core Integration

- Middleware for logging, monitoring, and tenant resolution

- Health check endpoints with detailed diagnostics

- Service registration extensions

- Configuration binding

- Request/response pipeline integration

API Integration

- RESTful service patterns

- OpenAPI/Swagger documentation

- Versioned API support

- CORS configuration

- Rate limiting and throttling

Database Integration

- Repository pattern implementations

- Entity Framework Core integration

- Multi-tenant data isolation

- Migration management

- Connection resilience

Cloud Platform Support

- Azure App Configuration integration

- Azure Key Vault for secrets

- Azure Application Insights monitoring

- Container orchestration (Kubernetes, Docker)

- CI/CD pipeline templates

Common Use Cases

Microservices Development

Build consistent microservices with standardized logging, monitoring, and configuration across your service mesh. Tenant context automatically propagates through service calls, and distributed tracing provides end-to-end visibility.

Multi-Tenant SaaS Applications

Develop SaaS applications with built-in tenant isolation, tenant-aware configuration, and per-tenant monitoring. Scale from single-tenant to enterprise multi-tenant deployments without code changes.

Enterprise Application Modernization

Migrate legacy applications to modern .NET with a proven framework that provides enterprise-grade infrastructure. Standardize across your application portfolio while maintaining consistency.

Rapid Prototyping and MVP Development

Accelerate time-to-market by focusing on business logic while the SDK handles infrastructure concerns. Production-ready from day one with built-in observability and configurability.

High-Compliance Environments

Support regulatory programs (SOC 2, HIPAA, GDPR) with audit logging hooks, data masking helpers, tenant isolation, and extensible monitoring. Each component provides building blocks you can tailor to your policies.

Technical Specifications

- Platform: .NET 8+ with C# 12+

- Architecture: Clean Architecture with Domain-Driven Design

- Testing: xUnit with FluentAssertions and Moq

- Documentation: Comprehensive XML documentation with full IntelliSense support

- Dependencies: Minimal external dependencies, extensible providers

- Packaging: NuGet packages for modular adoption

- Support: Enterprise support options available

Why Riptide Platform SDK

Business Value

- Faster Development: Pre-built infrastructure components eliminate months of foundational work

- Reduced Technical Debt: Clean architecture patterns prevent common pitfalls

- Lower Maintenance Costs: Well-tested, documented code requires less ongoing effort

- Faster Onboarding: New developers productive immediately with familiar patterns

- Risk Mitigation: Battle-tested components reduce production incidents

Technical Excellence

- Full IntelliSense Support: Complete XML documentation enables rich IDE integration

- Comprehensive Test Coverage: Unit, integration, and architecture tests

- Clean Architecture: SOLID principles throughout

- Performance Optimized: Efficient implementations with minimal overhead

- Extensibility: Provider pattern allows customization without forking

Enterprise Ready

- Multi-Tenant Native: Built for SaaS from the ground up

- Observable: Comprehensive logging and monitoring

- Secure: Built-in secrets management and data masking

- Scalable: Designed for high-throughput scenarios

- Compliance-Friendly: Audit trail and retention building blocks ready for extension

Support & Resources

- Active Development: Regular updates and improvements

- Comprehensive Documentation: API docs, guides, and examples

- Sample Applications: Real-world reference implementations

- Best Practices: Proven patterns and anti-pattern guidance

- Enterprise Support: Professional support options available

Getting Started

Quick Start

// Program.cs

var builder = WebApplication.CreateBuilder(args);

// Add Riptide Platform services

builder.Services

.AddRiptideIdentity(builder.Configuration)

.AddRiptideLogging(options => options.UseConsole().UseFile())

.AddRiptideConfiguration(options => options.UseLocalDevelopment())

.AddRiptideMonitoring(options => options.UseDataDog())

.AddRiptideDependencyInjection()

.AddRiptideTenantIsolation();

var app = builder.Build();

// Add Riptide middleware

app.UseAuthentication()

.UseAuthorization()

.UseRiptideLogging()

.UseRiptideTenantResolution()

.UseRiptideMonitoring();

app.Run();

Installation

For the simplest onboarding, install the unified SDK meta-package:

dotnet add package Riptide.Platform.SDK

If you prefer to adopt components one at a time, install the Bootstrap packages individually:

dotnet add package Riptide.Platform.Identity.Bootstrap

dotnet add package Riptide.Platform.Logging.Bootstrap

dotnet add package Riptide.Platform.Configuration.Bootstrap

dotnet add package Riptide.Platform.Monitoring.Bootstrap

dotnet add package Riptide.Platform.Persistence.Bootstrap

dotnet add package Riptide.Platform.DependencyInjection.Bootstrap

dotnet add package Riptide.Platform.TenantIsolation.Bootstrap

dotnet add package Riptide.Platform.Security.Bootstrap

dotnet add package Riptide.Platform.Documentation.Bootstrap

dotnet add package Riptide.Platform.Testing.Bootstrap

# AI Agent Skills are included automatically—no package install required

dotnet add package Riptide.Platform.Licensing

Identity Management Setup

Configure your application's identity mode based on deployment requirements:

// Self-Contained Mode (appsettings.json)

{

"Identity": {

"Mode": "SelfContained",

"SelfContained": {

"DatabaseProvider": "SQLite",

"ConnectionString": "Data Source=identity.db",

"JwtSecretKey": "#{JWT_SECRET_KEY}#your-secret-key-min-32-chars",

"CreateDefaultAdmin": true

}

}

}

// SSO Mode (appsettings.json)

{

"Identity": {

"Mode": "SingleSignOn",

"Sso": {

"Provider": "AzureEntraId",

"ClientId": "#{SSO_CLIENT_ID}#your-client-id",

"ClientSecret": "#{SSO_CLIENT_SECRET}#your-client-secret",

"TenantId": "#{SSO_TENANT_ID}#your-tenant-id"

}

}

}

// Application Manager Mode with Capabilities (appsettings.json)

{

"Identity": {

"Mode": "ApplicationManager",

"ApplicationManager": {

"ServerUrl": "https://appmanager.riptide.solutions",

"ApplicationName": "myapp",

"ApiKey": "#{APP_MANAGER_API_KEY}#your-api-key",

"Capabilities": [

{

"Code": "myapp:read",

"Name": "View Data",

"Description": "Can view application data"

},

{

"Code": "myapp:admin",

"Name": "Administrator",

"Description": "Full administrative access"

}

]

}

}

}

Use declarative capability-based authorization in your controllers:

[ApiController]

[Route("api/data")]

public class DataController : ControllerBase

{

[HttpGet]

[RequireCapability("myapp:read")]

public async Task<IActionResult> GetData()

{

// Capability checked automatically by attribute

return Ok(data);

}

[HttpPost]

[RequireCapability("myapp:create", "myapp:admin")]

public async Task<IActionResult> CreateData([FromBody] DataDto data)

{

// User must have either capability

return Ok(result);

}

}

Licensing Your Application

Protect your application with the SDK's cryptographic licensing system:

// Add licensing to your application

builder.Services.AddRiptideLicensing(options =>

{

options.Token = builder.Configuration["Riptide:Platform:License:Token"];

options.InstallationId = builder.Configuration["Riptide:Platform:License:InstallationId"];

});

// For development, use the LicenseGenerator tool:

// cd tools/LicenseGenerator && dotnet run

See the Licensing Component Documentation for detailed information about the cryptographic licensing system, key management, and the LicenseGenerator tool.

Architecture Validation

The SDK includes built-in architecture tests to ensure your application maintains clean architecture principles:

- Layer dependency validation

- Naming convention enforcement

- Test coverage requirements

- Documentation completeness checks

Performance Characteristics

- Designed for Low Overhead: Middleware is tuned to stay lightweight—benchmark in your own environment before going live.

- Logging Pipeline: Asynchronous batching defaults help balance throughput and latency; adjust policies to fit your workload.

- Configuration & Metrics Caching: Reduces redundant lookups while remaining responsive to change notifications.

- Memory Awareness: Hot paths minimize allocations for typical web workloads; validate with your production load tests.

- Scale Guidance: Internal stress tests cover high-throughput scenarios, but plan full performance runs prior to production.

Compliance & Security

- Audit Trail Hooks: Structured logging and change tracking primitives to plug into your compliance workflows.

- Sensitive Data Guardrails: Masking utilities and encryption extension points to align with HIPAA or similar policies.

- Tenant Controls: Isolation patterns and deletion hooks that support GDPR-style data requests.

- Security Foundations: Secrets management helpers and least-privilege defaults that you can extend for your environment.

- Ongoing Updates: Regular dependency updates and vulnerability scanning guidance; review against your internal standards.

Support & Resources

- Documentation: docs/

- Sample Applications: samples/

- API Reference: docs/api/

- Enterprise Support: Contact for professional support options

Riptide Platform SDK - Building enterprise applications the right way, from day one.

SDK Identity Component

Overview

The Riptide SDK Identity Component is an enterprise-grade authentication and authorization framework for .NET 8+ applications that provides secure user management, role-based access control, token-based authentication, and seamless integration with Riptide Application Manager. It enables development teams to implement consistent security across all Riptide platform applications while maintaining flexibility for custom authorization requirements.

Purpose

Modern applications require sophisticated identity management that supports secure authentication, fine-grained authorization, and integration with centralized identity providers. Traditional authentication approaches often result in inconsistent security implementations, duplicated user management logic, and complex integration challenges. Riptide Identity solves this by:

- Centralizing Authentication: Integration with Riptide Application Manager for unified user management

- Standardizing Authorization: Role-based and claims-based access control patterns

- Securing API Access: Token-based authentication with automatic validation

- Supporting Multi-Tenancy: Tenant-aware authentication and authorization

- Enabling SSO: Single sign-on across Riptide platform applications

- Simplifying Integration: Consistent authentication across all services

- Providing Audit Trails: Track authentication events and authorization decisions

Key Capabilities

Authentication Management

- Token-Based Authentication: Support for JWT tokens with automatic validation

- Application Manager Integration: Seamless connection to Riptide Application Manager for user authentication

- Session Management: Secure session handling with configurable expiration

- Password Policies: Configurable password strength and rotation requirements

- Multi-Factor Support: Integration points for MFA providers

- API Key Authentication: Support for service-to-service authentication

Authorization Framework

- Role-Based Access Control: Assign users to roles with specific permissions

- Claims-Based Authorization: Fine-grained permissions based on user claims

- Policy-Based Authorization: Define custom authorization policies

- Resource-Based Authorization: Context-aware permission checks for specific resources

- Tenant Isolation: Automatic enforcement of tenant boundaries

- Permission Caching: Performance-optimized permission lookups

Token Management

- Token Generation: Create authentication tokens with configurable claims

- Token Validation: Automatic validation of token signatures and expiration

- Token Refresh: Support for refresh tokens to maintain sessions

- Token Revocation: Immediate invalidation of compromised tokens

- Scope Management: Define and enforce token scopes for API access

- Audience Validation: Ensure tokens are used for intended services

User Context

- Current User Access: Retrieve authenticated user information throughout request pipeline

- Tenant Context: Automatic tenant identification from authentication context

- Role Membership: Query user roles and permissions

- Claims Access: Read user claims for authorization decisions

- Impersonation Support: Admin users can impersonate other users for support scenarios

- Anonymous Handling: Graceful handling of unauthenticated requests

Integration with Application Manager

- User Synchronization: Keep local user cache synchronized with Application Manager

- Centralized Management: Delegate user administration to Application Manager

- Credential Validation: Validate credentials against Application Manager

- Profile Updates: Propagate user profile changes from Application Manager

- Group Membership: Sync group and role assignments

- Password Resets: Integrate with Application Manager password reset flows

Security Features

- HTTPS Enforcement: Ensure all identity operations use secure connections

- CORS Configuration: Control cross-origin authentication requests

- Rate Limiting: Protect against brute force authentication attempts

- Audit Logging: Track all authentication and authorization events

- Token Encryption: Encrypt sensitive token contents

- Secure Cookie Handling: HttpOnly and Secure flags for authentication cookies

Integration Points

ASP.NET Core

// Startup configuration

builder.Services.AddRiptideIdentity(options =>

{

options.UseApplicationManager(config =>

{

config.Endpoint = "https://appmanager.example.com";

config.ClientId = "your-application-id";

config.ClientSecret = configuration["Identity:ClientSecret"];

});

options.EnableTokenValidation()

.EnableRoleBasedAuthorization()

.EnableAuditLogging();

});

// Middleware

app.UseAuthentication();

app.UseAuthorization();

Authorization Policies

// Define custom policies

builder.Services.AddAuthorization(options =>

{

options.AddPolicy("RequireAdmin", policy =>

policy.RequireRole("Admin"));

options.AddPolicy("RequireManager", policy =>

policy.RequireClaim("permission", "manage"));

});

// Use in controllers

[Authorize(Policy = "RequireAdmin")]

public class AdminController : ControllerBase

{

// Admin-only endpoints

}

Accessing User Context

// Inject user context service

public class MyService

{

private readonly IUserContext _userContext;

public MyService(IUserContext userContext)

{

_userContext = userContext;

}

public async Task ProcessRequest()

{

var userId = _userContext.UserId;

var tenantId = _userContext.TenantId;

var roles = _userContext.Roles;

if (_userContext.IsInRole("Admin"))

{

// Admin-specific logic

}

}

}

Common Use Cases

Application Authentication

Integrate Riptide Identity component to authenticate users across platform applications. Users log in once through Application Manager and receive tokens that work across all services. The component validates tokens, extracts user information, and makes it available throughout the request pipeline.

API Authorization

Protect API endpoints with role-based or policy-based authorization. Define authorization requirements at the controller or action level. The component automatically enforces authorization rules and returns appropriate HTTP status codes for unauthorized requests.

Multi-Tenant Security

Automatically enforce tenant isolation by extracting tenant context from authentication tokens. The component ensures users can only access resources within their tenant, preventing cross-tenant data leakage.

Service-to-Service Authentication

Enable secure communication between platform services using API keys or service tokens. The component validates service credentials and establishes service identity for authorization decisions.

Administrative Functions

Support administrative scenarios where support staff need to impersonate users to diagnose issues. The component tracks impersonation sessions and maintains audit trails for compliance.

Single Sign-On

Enable seamless single sign-on across multiple Riptide platform applications. Users authenticate once through Application Manager and gain access to all authorized applications without additional logins.

Configuration

Application Manager Connection

{

"RiptideIdentity": {

"ApplicationManager": {

"Endpoint": "https://appmanager.example.com",

"ClientId": "workflow-engine",

"ClientSecret": "stored-in-key-vault",

"RequireHttps": true,

"TokenExpiration": "1h",

"RefreshTokenExpiration": "7d"

}

}

}

Authorization Configuration

{

"RiptideIdentity": {

"Authorization": {

"EnableRoleBasedAccess": true,

"EnableClaimsBasedAccess": true,

"CachePermissions": true,

"CacheDuration": "5m",

"EnforceTenantIsolation": true

}

}

}

Security Settings

{

"RiptideIdentity": {

"Security": {

"RequireHttps": true,

"EnableAuditLogging": true,

"RateLimitPerMinute": 60,

"EnableImpersonation": false,

"AllowAnonymous": false

}

}

}

Best Practices

Token Security

- Store client secrets in secure configuration providers (Azure Key Vault, environment variables)

- Use HTTPS for all identity operations

- Set appropriate token expiration times based on security requirements

- Implement token refresh flows to maintain user sessions securely

- Revoke tokens immediately upon user logout or security events

Authorization Design

- Use policy-based authorization for complex permission logic

- Cache authorization decisions for performance

- Implement resource-based authorization for fine-grained access control

- Document authorization requirements for each endpoint

- Regularly review and audit permission assignments

Multi-Tenant Considerations

- Always validate tenant context in authorization logic

- Enforce tenant isolation at the data access layer

- Log tenant context with all operations for audit trails

- Test cross-tenant access prevention thoroughly

- Monitor for attempted tenant boundary violations

Performance Optimization

- Enable permission caching to reduce Application Manager calls

- Use local token validation when possible

- Implement appropriate cache expiration times

- Monitor authentication performance metrics

- Scale Application Manager appropriately for load

Error Handling

Common Scenarios

- 401 Unauthorized: Token missing, invalid, or expired

- 403 Forbidden: User authenticated but lacks required permissions

- Connection Failures: Application Manager unavailable or network issues

- Token Validation Errors: Signature mismatch or malformed tokens

- Configuration Errors: Missing or invalid Application Manager settings

Graceful Degradation

- Cache recent authentication results for brief Application Manager outages

- Provide clear error messages for authentication failures

- Log detailed error information for troubleshooting

- Implement retry logic for transient failures

- Alert operations team for prolonged Application Manager unavailability

Monitoring & Observability

Key Metrics

- Authentication Success Rate: Track successful vs failed authentication attempts

- Token Validation Performance: Measure token validation latency

- Authorization Decision Time: Monitor authorization check performance

- Application Manager Response Time: Track Application Manager API performance

- Failed Authorization Attempts: Detect potential security issues

Health Checks

- Application Manager Connectivity: Verify connection to Application Manager

- Token Validation: Test token signing key availability

- Configuration Validity: Validate Identity settings at startup

- Cache Health: Monitor permission cache performance

- Authentication Pipeline: Verify middleware configuration

Security Considerations

Token Protection

- Never log authentication tokens in plain text

- Encrypt tokens in transit and at rest

- Implement token rotation policies

- Monitor for token replay attempts

- Use short-lived tokens with refresh mechanisms

Authorization Security

- Implement defense in depth with multiple authorization layers

- Validate authorization at both API gateway and service levels

- Log all authorization decisions for audit

- Regularly review and update role definitions

- Test authorization rules thoroughly

Compliance Support

- Maintain comprehensive audit logs of authentication events

- Document authorization rules for compliance reviews

- Support user access reviews and permission audits

- Enable administrator accountability through audit trails

- Provide compliance reports for authentication activity

Related Components

- Configuration Component: Secure storage of Application Manager credentials

- Logging Component: Audit trails for authentication events

- Tenant Isolation Component: Multi-tenant security enforcement

- Monitoring Component: Track authentication performance metrics

SDK Configuration Component

Overview

The Riptide SDK Configuration Component is an enterprise-grade configuration management framework for .NET 8.0 applications that provides secure, multi-source configuration with hot-reload capabilities, secrets management, tenant-aware settings, and compliance-grade auditing. It enables development teams to manage application configuration across environments while maintaining security, flexibility, and operational efficiency.

Purpose

Modern applications require sophisticated configuration management that supports local development, staging environments, and production deployments with different security requirements. Traditional configuration approaches often result in hardcoded values, insecure secrets management, inflexible environment handling, and deployment delays for configuration changes. Riptide Configuration solves this by:

- Unifying Multiple Sources: Local files, Azure Key Vault, Value Manager, and custom providers

- Securing Secrets: Encrypted storage and retrieval of sensitive configuration

- Enabling Value Reload: Monitor and reload configuration values using file watchers and IOptionsMonitor

- Supporting Configuration Patterns: Abstractions for tenant-aware configuration scenarios

- Providing Failover: Automatic provider fallback for high availability

- Simplifying Development: Seamless transition from local to cloud configuration

- Capturing Audit Trails: Persistent access/change history with retention controls, summarized reports, and one-click manual purge for compliance teams

Key Capabilities

Multi-Source Configuration

- Local Development: File-based configuration (appsettings.json, appsettings.{Environment}.json)

- Azure Key Vault: Cloud-based secrets management with Azure identity integration

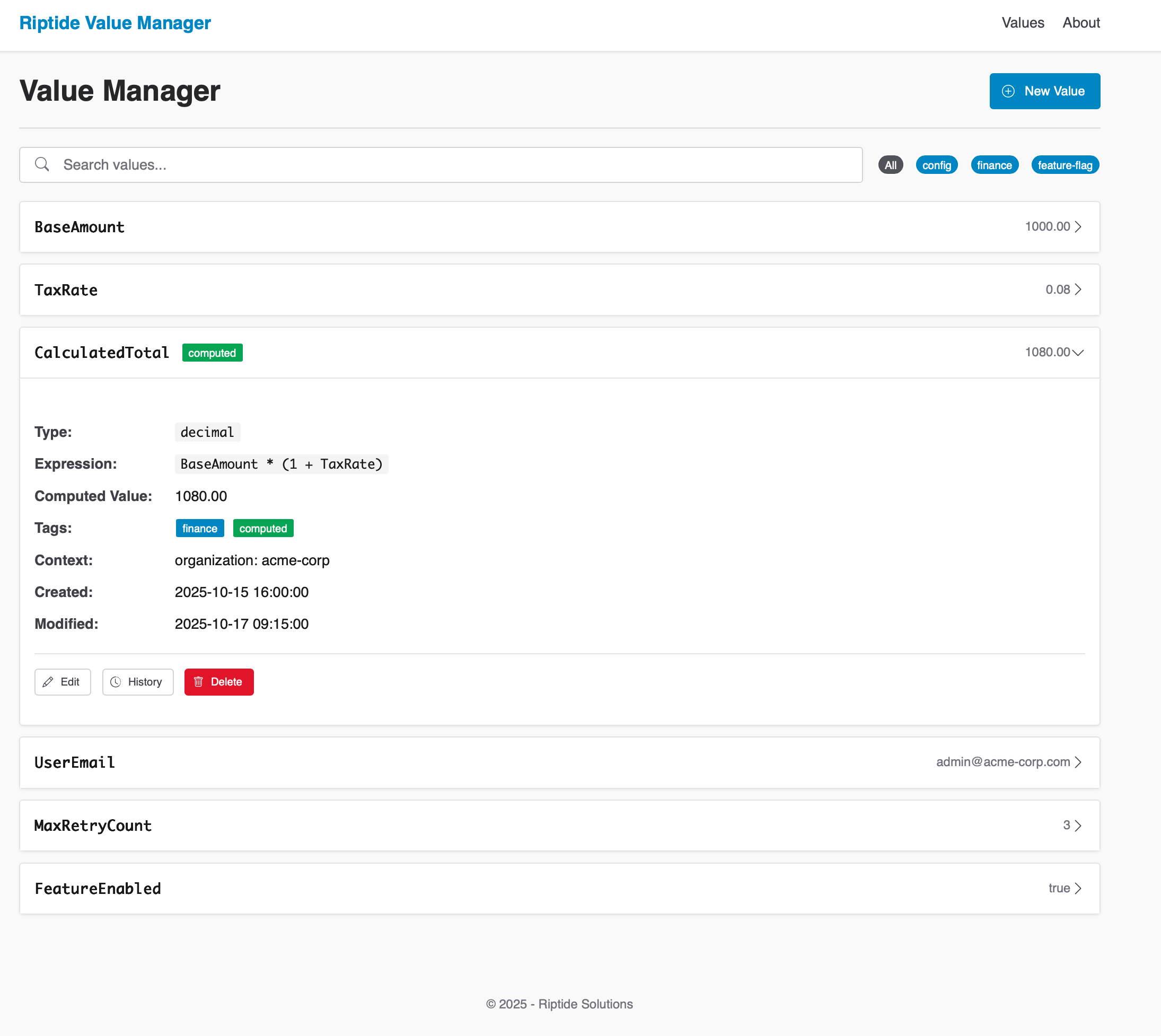

- Value Manager: Business-user-friendly configuration management with authorized updates

- Custom Providers: Extensible architecture for adding new configuration sources

- Provider Hierarchy: Configurable priority order with automatic failover

Secrets Management

- Provider Encryption: Leverage backing stores like Azure Key Vault or Strongbox to handle encryption at rest and in transit

- Controlled Retrieval: Integrate provider SDKs to load secrets into process memory only when needed

- Access Control: Delegate authorization to provider-level roles, identities, and policies

- Rotation Support: Refresh secrets or connection strings without redeploying by updating the underlying provider

- Audit Trail Integration: Built-in audit pipeline persists access/change events with configurable retention and redaction

Configuration Value Monitoring

- File Watching: Monitor configuration files for changes using file system watchers

- IOptionsMonitor Integration: Leverage .NET's IOptionsMonitor for reactive configuration

- Change Detection: Detect when configuration values change on disk or in providers

- Application Response: Application code can respond to configuration changes via IOptionsMonitor

Important Limitation: Not all configuration can be hot-reloaded. Dependency injection registrations, middleware pipeline, and some infrastructure components require application restart. Use IOptionsMonitor pattern for application settings that can change at runtime.

Audit Trails & Compliance

- Change History: Automatic write/delete tracking with before/after previews (redacted by default)

- Access Visibility: Optional read logging shows which services requested configuration and why

- Retention Policies: Configure retention windows per environment (e.g., 90 days dev, 7 years regulated)

- Manual Purge: Invoke compliance-grade purge endpoints to delete historic records immediately without waiting for scheduled sweeps

- Summarized Reporting: Built-in reporting service projects change summaries for dashboards or regulator exports in seconds

- Narrow-Scan Queries: Optimized lookups reduce provider scans to the exact day and path hashes involved, keeping investigations fast even at scale

- Persistent Store: Entries are stored under the

audit/namespace in your configured providers for long-term durability

Tenant-Aware Configuration Patterns

- Configuration Abstraction: Interfaces for tenant-scoped configuration retrieval

- Provider Extensibility: Build tenant-aware configuration providers

- Pattern Examples: Sample implementations for multi-tenant configuration scenarios

Note: This component provides abstractions and patterns for tenant-aware configuration. Implementation details depend on your multi-tenancy architecture.

Provider Orchestration

- Priority-Based Selection: Configure provider precedence

- Automatic Failover: Switch to backup provider on failure

- Provider Health Checks: Monitor configuration source availability

- Caching Strategy: In-memory caching with configurable TTL

- Bulk Retrieval: Fetch multiple configuration values in single call

Integration Points

ASP.NET Core

// Startup configuration

builder.Services.AddRiptideConfiguration(options =>

{

// Local development

options.UseLocalDevelopment(config =>

{

config.BaseDirectory = builder.Environment.ContentRootPath;

config.EnvironmentName = builder.Environment.EnvironmentName;

});

// Azure Key Vault for production

options.UseAzureKeyVault(config =>

{

config.VaultUri = new Uri(builder.Configuration["Azure:KeyVault:Uri"]);

config.UseManagedIdentity = true;

});

// Enable hot-reload

options.EnableHotReload = true;

options.CacheExpirationMinutes = 5;

});

Dependency Injection

public class OrderService

{

private readonly IConfigurationRepository _config;

public OrderService(IConfigurationRepository config)

{

_config = config;

}

public async Task<decimal> GetMinimumOrderAmount()

{

var value = await _config.GetConfigurationValueAsync(

"Orders:MinimumAmount"

);

return decimal.Parse(value.Value);

}

}

Strongly-Typed Configuration

public class OrderSettings

{

public decimal MinimumAmount { get; set; }

public int MaxItemsPerOrder { get; set; }

public bool EnableDiscounts { get; set; }

}

// Retrieve and bind

var settings = await _config.GetConfigurationSectionAsync<OrderSettings>(

"Orders"

);

Common Use Cases

Multi-Environment Deployment

Manage configuration across development, staging, and production environments without code changes. Use local files in development, Azure Key Vault in staging, and Value Manager in production with the same application code.

Feature Flags

Control feature availability through configuration. Enable/disable features per tenant or environment without redeployment. Use hot-reload to toggle features in real-time based on business needs.

Connection String Management

Store database connection strings, API keys, and service endpoints securely. Use Azure Key Vault or Value Manager to manage secrets, with automatic rotation and access control.

Tenant-Specific Settings

Manage configuration for thousands of tenants efficiently. Store tenant-specific thresholds, feature enablement, branding settings, and integration credentials with isolation guarantees.

Compliance Configuration

Maintain configuration for compliance requirements (data retention policies, encryption settings, audit requirements). Track changes through audit logs and enforce validation rules.

Technical Specifications

Supported Providers

- LocalDevelopment: File-based configuration for development

- AzureKeyVault: Azure-native secrets management

- ValueManager: Business-user-friendly configuration management with authorized updates

- Custom: Implement

IConfigurationProviderinterface

Configuration Value Types

- String: Simple text values

- Numeric: Integers, decimals, floating-point

- Boolean: True/false flags

- JSON: Complex objects and arrays

- DateTime: Timestamps and dates

- Encrypted: Sensitive data with automatic decryption

Performance

- Cache First: Prioritizes in-memory caches to avoid repeated remote lookups

- Provider Aware: End-to-end latency depends on the backing store—monitor provider SLAs and network conditions

- Incremental Reloads: Hot-reload refreshes only the values that change instead of forcing application restarts

- Batch-Friendly: APIs expose bulk retrieval patterns so you can reduce chattiness when fetching related settings

- Lean Footprint: Core abstractions avoid unnecessary allocations; profile under your expected workload

Why Riptide Configuration Component

Business Value

- Reduced Deployment Time: Adjust configuration without shipping new binaries

- Security Support: Integrate with provider-level encryption and access policies

- Lower Operational Costs: Fewer redeployments when values change

- Faster Incident Response: Override settings quickly during an incident

- Compliance Building Blocks: Emit events and leverage provider logs to satisfy audit requirements

Technical Excellence

- Clean Architecture: Clear separation across layers

- Provider Pattern: Extensible for custom sources

- Well Tested: Comprehensive unit and integration tests

- Type Safety: Strong typing with validation

- Zero Downtime: Hot-reload without restart

Enterprise Ready

- Tenant Awareness: Patterns for building tenant-scoped configuration flows

- High Availability: Configure provider failover or fallback sources

- Operational Playbooks: Guidance and samples for environment promotion

- Comprehensive Documentation: XML docs and guides

- Enterprise Support: Professional support available

Configuration Options

Local Development Provider

options.UseLocalDevelopment(config =>

{

config.BaseDirectory = "/app/config";

config.EnvironmentName = "Development";

config.FileNames = new[]

{

"appsettings.json",

"appsettings.{Environment}.json"

};

config.ReloadOnChange = true;

config.Optional = false;

});

Azure Key Vault Provider

options.UseAzureKeyVault(config =>

{

config.VaultUri = new Uri("https://myvault.vault.azure.net/");

config.UseManagedIdentity = true;

config.TenantId = "your-tenant-id";

config.ClientId = "your-client-id";

config.ReloadInterval = TimeSpan.FromMinutes(5);

config.CacheSecrets = true;

});

Value Manager Provider

options.UseValueManager(config =>

{

config.ApiUrl = "https://valuemanager.mycompany.com/api";

config.ApiKey = "your-api-key";

config.ApplicationName = "myapp";

config.Environment = "production";

config.RetryCount = 3;

config.TimeoutSeconds = 30;

});

Caching and Hot-Reload

options.EnableHotReload = true;

options.CacheExpirationMinutes = 5;

options.ReloadOnChange = true;

options.ValidateOnReload = true;

// Subscribe to configuration changes

_config.OnConfigurationChanged += async (sender, args) =>

{

Console.WriteLine($"Configuration changed: {args.Key}");

await RefreshDependentServicesAsync();

};

Best Practices

Do's

- ✅ Use environment variables for provider credentials

- ✅ Enable hot-reload for feature flags and thresholds

- ✅ Store secrets in Azure Key Vault or Value Manager, not files

- ✅ Use strongly-typed configuration classes

- ✅ Validate configuration at startup

Don'ts

- ❌ Don't store secrets in appsettings.json

- ❌ Don't disable caching in production (performance impact)

- ❌ Don't use hot-reload for structural changes (require restart)

- ❌ Don't forget to handle configuration change events

- ❌ Don't bypass provider hierarchy for direct access

Security Considerations

Secrets Storage

- All secrets encrypted at rest

- Use Azure Key Vault or Value Manager for production

- Never commit secrets to source control

- Rotate secrets regularly

- Use managed identities when available

Access Control

- Limit configuration provider access

- Use role-based access control (RBAC)

- Audit configuration access

- Implement least privilege principle

- Monitor for unauthorized access

Tenant Isolation

- Ensure tenants cannot access other tenant's configuration

- Validate tenant context before retrieval

- Use separate storage per tenant when required

- Log cross-tenant access attempts

- Test isolation boundaries

Troubleshooting

Configuration Not Found

- Verify provider is configured correctly

- Check configuration key spelling (case-sensitive)

- Ensure provider has necessary permissions

- Review provider health check status

- Check cache expiration settings

Hot-Reload Not Working

- Verify

EnableHotReload = truein options - Check provider supports reload (file watcher, polling)

- Ensure configuration change event handlers are registered

- Review reload interval settings

- Check application permissions for file system access

Performance Issues

- Enable caching with appropriate TTL

- Use batch operations for multiple values

- Reduce reload check frequency

- Consider provider latency (network calls)

- Profile configuration access patterns

Migration Guide

From Microsoft.Extensions.Configuration

Riptide Configuration extends Microsoft.Extensions.Configuration:

- Install Riptide.Platform.Configuration.Bootstrap

- Replace

builder.Configurationwithservices.AddRiptideConfiguration() - Configure providers (local, Azure Key Vault, Value Manager)

- Update code to use

IConfigurationRepository - Enable hot-reload and caching as needed

From Custom Configuration

If migrating from custom configuration solution:

- Identify configuration sources (files, database, APIs)

- Map to Riptide providers or create custom provider

- Migrate configuration keys to new structure

- Update code to use Riptide abstractions

- Test failover and hot-reload functionality

Support & Resources

- API Reference: Configuration API Documentation

- User Guide: Configuration Guide

- Sample Application: Basic Web API Sample

- Specifications: Configuration Specification

Riptide Configuration Component - Secure, flexible configuration management for modern applications.

SDK Logging Component

Overview

The Riptide SDK Logging Component is an enterprise-grade structured logging framework for .NET 8.0 applications that provides comprehensive observability through multiple logging providers, correlation tracking, sensitive data masking, and middleware integration. It enables development teams to gain deep insights into application behavior while ensuring compliance with data privacy regulations.

Purpose

Modern applications require sophisticated logging capabilities to diagnose issues, track user activity, monitor performance, and maintain audit trails. Traditional logging approaches often result in inconsistent implementations, missing correlation context, exposed sensitive data, and poor observability. Riptide Logging solves this by:

- Standardizing Log Structure: Consistent, structured logging across all applications

- Enabling Correlation Tracking: Trace requests across service boundaries with correlation IDs

- Protecting Sensitive Data: Pattern-based masking for common PII formats

- Supporting Multiple Providers: Console, File, and extensible custom providers with external log transformation

- Integrating with ASP.NET Core: Middleware for automatic request/response logging

- Providing Performance Insights: Track slow requests and identify bottlenecks

Key Capabilities

Structured Logging

- Consistent Format: JSON-structured logs with predictable schema

- Contextual Information: Automatic capture of timestamp, log level, category, and message

- Custom Properties: Add domain-specific properties to any log entry

- Correlation IDs: Unique identifiers that track requests across distributed systems

- Tenant Context: Automatic tenant identification in multi-tenant scenarios

Multiple Provider Support

- Console Provider: Development-friendly console output with color coding

- File Provider: Rolling file logging with size and time-based rotation

- DataDog Provider: Send log messages directly to DataDog Logs API

- External Provider Framework: Transform and forward logs to Elastic or custom endpoints

- Custom Providers: Extensible architecture for adding new providers

- Provider Failover: Automatic fallback if primary provider fails

DataDog Integration: Current implementation sends structured log messages to DataDog Logs API. For full APM, distributed tracing, and profiling, integrate DataDog's native .NET tracer alongside this logging provider.

Sensitive Data Protection

- Pattern-Based Masking: Regex-based detection and redaction for known PII formats (emails, SSNs, credit cards)

- Custom Patterns: Define organization-specific sensitive data patterns using regex

- Configurable Rules: Enable/disable masking per environment

- Compliance Support: Documented patterns give you a starting point for GDPR, HIPAA, or SOC 2 reviews

- Performance Conscious: Lightweight pattern matching tuned for common logging scenarios

Note: Masking is based on regex patterns for known formats. Review and extend patterns for your specific compliance needs. True context-aware PII detection requires additional tooling.

Request/Response Logging

- Automatic Middleware: Log all HTTP requests and responses

- Header Capture: Selectively log request/response headers

- Body Logging: Capture request/response bodies (with size limits)

- Performance Tracking: Measure and log request duration

- Error Context: Capture detailed error information on failures

Health Check Integration

- Provider Health: Monitor logging provider availability

- Configuration Validation: Verify logging configuration at startup

- Connectivity Checks: Test provider connectivity

- Degraded Mode: Continue operation if provider unavailable

- Health Endpoints: ASP.NET Core health check integration

Integration Points

ASP.NET Core

// Startup configuration

builder.Services.AddRiptideLogging(options =>

{

options.UseConsole()

.UseFile("/logs")

.UseDataDog(apiKey: configuration["DataDog:ApiKey"])

.EnableSensitiveDataMasking();

});

// Middleware

app.UseRiptideLogging();

Dependency Injection

public class OrderService

{

private readonly ILoggingRepository _logger;

public OrderService(ILoggingRepository logger)

{

_logger = logger;

}

public async Task ProcessOrder(Order order)

{

await _logger.LogInformationAsync(

"Processing order",

new { OrderId = order.Id, Amount = order.TotalAmount }

);

}

}

Manual Logging

// Direct repository usage

var logger = serviceProvider.GetRequiredService<ILoggingRepository>();

await logger.LogInformationAsync("User login successful", new

{

UserId = user.Id,

LoginTime = DateTime.UtcNow,

IpAddress = request.IpAddress

});

Common Use Cases

Distributed Tracing

Track a user request across multiple microservices by propagating correlation IDs. When Service A calls Service B, the correlation ID flows automatically, allowing you to trace the entire request path in your logging dashboard.

Security Audit Trails

Maintain comprehensive audit logs for compliance requirements. Automatically capture who performed what action, when, and from where, while ensuring sensitive data is masked before writing to logs.

Performance Monitoring

Identify slow requests and performance bottlenecks. The middleware automatically logs request duration, and you can set thresholds to trigger warnings for slow operations.

Error Diagnostics

When exceptions occur, capture complete context including request details, tenant information, correlation IDs, and stack traces. This enables rapid root cause analysis during incidents.

Compliance Reporting

Generate compliance reports from structured logs. Filter by tenant, date range, action type, or user to demonstrate regulatory compliance (GDPR data access, HIPAA audit logs, SOC 2 change tracking).

Technical Specifications

Supported Providers

- Console:

ConsoleLoggingProviderwith color-coded output - File:

FileLoggingProviderwith rolling file support - DataDog:

DataDogLoggingProviderwith APM integration - Custom: Implement

ILoggingProviderinterface

Log Levels

Trace: Detailed diagnostic informationDebug: Development debugging informationInformation: General informational messagesWarning: Potential issues that don't prevent operationError: Errors that need attentionCritical: Critical failures requiring immediate action

Performance

- Async First: All logging operations are asynchronous

- Buffer Management: In-memory buffering with overflow handling

- Minimal Allocations: Optimized for low garbage collection pressure

- Efficient Processing: Fast log formatting and provider dispatch

Performance Note: Actual throughput depends on provider implementation, infrastructure sizing, and downstream backends. The file provider prioritizes batch writes; benchmark in your environment before committing to performance targets.

Why Riptide Logging Component

Business Value

- Faster Incident Resolution: Correlation tracking enables request tracing across services

- Compliance Foundation: Pattern-based masking and structured logs help support regulatory reviews (extend patterns to suit your policies)

- Reduced Storage Costs: Structured logs enable efficient storage and querying

- Improved Observability: Gain insights into application behavior and user patterns

- Risk Mitigation: Comprehensive audit trails protect against liability

Technical Excellence

- Clean Architecture: Clear separation of concerns across layers

- Extensible Design: Provider pattern allows custom implementations

- Well Tested: Extensive automated tests across domains, infrastructure, and adapters

- Zero Dependencies: Core abstractions have no external dependencies

- Type Safe: Strong typing with compile-time validation

Enterprise Ready

- Multi-Tenant Support: Automatic tenant context in all logs

- High Performance: Minimal overhead, async operations

- Operationally Ready: Designed with high-volume scenarios in mind—validate with your telemetry targets

- Comprehensive Documentation: XML docs, guides, and examples

- Enterprise Support: Professional support options available

Configuration Options

Provider Configuration

options.UseConsole(config =>

{

config.MinimumLevel = LogLevel.Information;

config.EnableColors = true;

config.TimestampFormat = "yyyy-MM-dd HH:mm:ss.fff";

});

options.UseFile(config =>

{

config.LogDirectory = "/var/logs/myapp";

config.MaxFileSizeBytes = 10_485_760; // 10 MB

config.MaxFileCount = 30; // Keep 30 days

config.RollingInterval = RollingInterval.Daily;

});

options.UseDataDog(config =>

{

config.ApiKey = "your-api-key";

config.ServiceName = "myapp";

config.Environment = "production";

config.BatchSize = 100;

});

Sensitive Data Masking

options.EnableSensitiveDataMasking(config =>

{

config.MaskEmails = true;

config.MaskCreditCards = true;

config.MaskSocialSecurityNumbers = true;

config.CustomPatterns.Add(new Regex(@"CUSTOM-\d{6}"));

config.MaskingCharacter = '*';

});

Request Logging

options.ConfigureRequestLogging(config =>

{

config.LogHeaders = true;

config.LogRequestBody = true;

config.LogResponseBody = false;

config.MaxBodySize = 4096; // 4 KB

config.SlowRequestThreshold = TimeSpan.FromSeconds(5);

config.ExcludedPaths.Add("/health");

config.ExcludedPaths.Add("/metrics");

});

Best Practices

Do's

- ✅ Use structured logging with properties instead of string interpolation

- ✅ Include correlation IDs in all external API calls

- ✅ Log at appropriate levels (don't use Information for debug messages)

- ✅ Use meaningful log categories (typically namespace.classname)

- ✅ Include context properties for filtering and querying

Don'ts

- ❌ Don't log sensitive data without masking (PII, passwords, tokens)

- ❌ Don't log in tight loops without rate limiting

- ❌ Don't use string concatenation for log messages

- ❌ Don't ignore exceptions - always log with full stack trace

- ❌ Don't use synchronous logging in async code paths

Troubleshooting

Logs Not Appearing

- Verify provider is configured correctly

- Check minimum log level configuration

- Ensure provider connectivity (file permissions, network access)

- Review health check endpoint for provider status

Performance Issues

- Reduce log level in production (Information or higher)

- Enable batching for high-volume scenarios

- Exclude health check endpoints from request logging

- Consider async buffering for file provider

Sensitive Data Leaks

- Enable sensitive data masking in all environments

- Add custom patterns for organization-specific sensitive data

- Review logs manually before enabling body logging

- Use data loss prevention (DLP) tools to scan logs

Migration Guide

From Microsoft.Extensions.Logging

Riptide Logging builds on top of Microsoft.Extensions.Logging, so migration is straightforward:

- Install Riptide.Platform.Logging.Bootstrap

- Replace

services.AddLogging()withservices.AddRiptideLogging() - Update

ILogger<T>injections toILoggingRepository - Add structured properties to log calls

- Configure providers and middleware

From Serilog

If migrating from Serilog:

- Remove Serilog packages

- Install Riptide Logging packages

- Update sink configuration to use Riptide providers

- Replace

Log.LoggerwithILoggingRepository - Update structured logging syntax to Riptide conventions

Support & Resources

- API Reference: Logging API Documentation

- Configuration Guide: Configuration Documentation

- Sample Application: Basic Web API Sample

- Architecture: Clean Architecture Blueprint

Riptide Logging Component - Enterprise observability made simple.

SDK Monitoring Component

Overview

The Riptide SDK Monitoring Component is an enterprise-grade observability framework for .NET 8.0 applications that provides real-time metrics collection, monitoring, and alerting with support for multiple backends including DataDog and OpenTelemetry (compatible with Prometheus, Jaeger, and other OTLP backends). It enables development and operations teams to gain deep insights into application health, performance, and user behavior with built-in multi-tenancy and distributed tracing support.

Purpose

Modern distributed applications require comprehensive monitoring to ensure reliability, performance, and business continuity. Traditional monitoring approaches often result in incomplete visibility, vendor lock-in, missing tenant context, and difficult troubleshooting. Riptide Monitoring solves this by:

- Standardizing Metrics Collection: Consistent metrics across all applications and services

- Supporting Multiple Backends: Push metrics to DataDog, OpenTelemetry, and custom providers

- Enabling Tenant Context: Tag metrics with tenant identifiers for filtering

- Providing Metric Submission: Submit metrics to monitoring backends

- Simplifying Troubleshooting: Correlation tracking across requests

- Ensuring High Availability: Health checks and connectivity monitoring

Key Capabilities

Real-Time Metrics

- Counter Metrics: Track event occurrences (requests, errors, events)

- Gauge Metrics: Monitor current values (active connections, queue depth, memory usage)

- Histogram Metrics: Analyze distributions (response times, payload sizes)

- Custom Metrics: Define business-specific KPIs and measurements

- Metric Metadata: Tags, dimensions, and labels for filtering and grouping

Multiple Backend Support

- DataDog Provider: Push metrics and events to DataDog

- OpenTelemetry Provider: Export metrics using OpenTelemetry protocol (compatible with Prometheus, Jaeger, etc.)

- Console Provider: Development-friendly console output for testing

- In-Memory Provider: Testing and development scenarios

- Custom Providers: Extensible architecture for proprietary backends

- Provider Failover: Automatic backup provider selection

Note: Application Insights integration planned for future release. Current focus is DataDog and OpenTelemetry-compatible backends.

Tenant-Aware Monitoring

- Tenant Tagging: Tag metrics with tenant identifiers for filtering and segmentation

- Monitoring Attributes: Use

[MonitorTenant]attribute to automatically include tenant context - Per-Tenant Analysis: Filter and analyze metrics by tenant in your monitoring backend

Important: This component tags metrics with tenant context. Actual isolation, thresholds, and alerting are configured in your monitoring backend (DataDog, Grafana, etc.).

Distributed Tracing

- Correlation IDs: Track requests across service boundaries

- Span Management: Detailed timing for operations

- Dependency Tracking: Map service dependencies automatically

- Performance Analysis: Identify bottlenecks in distributed workflows

- Trace Visualization: Integration with APM dashboards

Health Check Integration

- Provider Connectivity: Monitor monitoring backend availability

- Application Health: Report overall application health status

- Dependency Health: Track database, cache, and API availability

- Custom Health Checks: Define business-specific health indicators

- ASP.NET Core Integration: Built-in health check endpoints

Integration Points

ASP.NET Core

// Startup configuration

builder.Services.AddRiptideMonitoring(options =>

{

// DataDog for production

options.UseDataDog(config =>

{

config.ApiKey = builder.Configuration["DataDog:ApiKey"];

config.ServiceName = "myapp";

config.Environment = builder.Environment.EnvironmentName;

});

// OpenTelemetry for Prometheus, Jaeger, etc.

options.UseOpenTelemetry(config =>

{

config.Endpoint = "http://localhost:4317";

config.Protocol = OtlpProtocol.Grpc;

});

// Enable distributed tracing

options.EnableDistributedTracing = true;

});

// Middleware

app.UseRiptideMonitoring();

Dependency Injection

public class OrderService

{

private readonly IMonitoringRepository _monitoring;

public OrderService(IMonitoringRepository monitoring)

{

_monitoring = monitoring;

}

public async Task ProcessOrder(Order order)

{

// Increment counter

await _monitoring.IncrementCounterAsync("orders.processed", 1);

// Record gauge

await _monitoring.RecordGaugeAsync("orders.value", order.TotalAmount);

// Track timing

using (var timer = _monitoring.StartTimer("orders.processing_time"))

{

await ProcessOrderInternalAsync(order);

}

}

}

Manual Metric Submission

// Submit custom metrics

await _monitoring.SubmitMetricAsync(new MetricValue

{

Name = "business.revenue",

Value = 1250.00m,

Type = MetricType.Gauge,

Timestamp = DateTime.UtcNow,

Tags = new Dictionary<string, string>

{

{ "tenant", "acme-corp" },

{ "region", "us-east" },

{ "plan", "enterprise" }

}

});

Common Use Cases

Application Performance Monitoring (APM)

Monitor request rates, response times, error rates, and throughput across all services. Identify performance degradation before users are impacted. Set up alerts for slow requests or elevated error rates.

Business KPI Tracking

Track business metrics like revenue, conversion rates, active users, and feature adoption. Create real-time dashboards for stakeholders. Correlate business metrics with technical performance.

Capacity Planning

Monitor resource utilization (CPU, memory, disk, network) to plan infrastructure scaling. Track growth trends and predict capacity needs. Identify optimization opportunities.

SLA Monitoring

Measure and report on service level agreements. Track uptime, availability, and performance against SLA thresholds. Generate compliance reports for customers.

Incident Detection and Response

Detect anomalies and trigger alerts for critical issues. Correlate metrics across services during incidents. Track mean time to detection (MTTD) and mean time to resolution (MTTR).

Multi-Tenant Analytics

Provide per-tenant usage analytics and billing data. Identify high-value tenants and usage patterns. Monitor tenant health and satisfaction metrics.

Technical Specifications

Supported Providers

- DataDog:

DataDogMonitoringProvider- push metrics to DataDog API - OpenTelemetry:

OpenTelemetryMonitoringProvider- export to OTLP-compatible backends (Prometheus, Jaeger, etc.) - Console:

ConsoleMonitoringProvider- development and testing - In-Memory:

InMemoryMonitoringProvider- unit testing - Custom: Implement

IMonitoringProviderinterface

Metric Types

- Counter: Monotonically increasing value (requests, events)

- Gauge: Point-in-time value (memory, connections, queue depth)

- Histogram: Distribution of values (latencies, sizes)

Note: Rate and Summary metrics are computed by backend systems (DataDog, Prometheus). This SDK focuses on raw metric submission.

Performance

- Async Operations: Metric submission paths are asynchronous to reduce impact on request threads

- Buffer Management: In-memory buffers smooth short bursts and expose hooks for overflow handling

- Instrumentation Overhead: Focused on lightweight tagging and batching so hot paths stay responsive

- Throughput Targets: Designed to batch metrics efficiently; measure with your chosen backend and retention policies

Performance Caveat: Network conditions, backend rate limits, and exporter settings ultimately determine sustained throughput. Benchmark under production-like load before adopting strict SLOs.

Why Riptide Monitoring Component

Business Value

- Reduce Downtime: Proactive monitoring helps spot issues earlier

- Faster Troubleshooting: Distributed tracing accelerates root-cause analysis and shortens MTTR

- Data-Driven Decisions: Business metrics inform strategic planning

- Improved Customer Satisfaction: Detect and resolve issues before users notice

- Compliance Support: Structured metrics and logs feed SLA and audit reporting pipelines

Technical Excellence

- Clean Architecture: Clear separation of concerns

- Provider Agnostic: Avoid vendor lock-in

- Well Tested: Extensive automated coverage across domains and adapters

- Type Safety: Strong typing with validation

- Extensible: Add custom metrics and providers

Enterprise Ready

- Multi-Tenant Native: Tenant isolation built-in

- High Performance: Minimal application overhead

- Production Proven: Battle-tested in high-traffic systems

- Comprehensive Documentation: XML docs and examples

- Enterprise Support: Professional support available

Configuration Options

DataDog Provider

options.UseDataDog(config =>

{

config.ApiKey = "your-api-key";

config.ApplicationKey = "your-app-key";

config.ServiceName = "myapp";

config.Environment = "production";

config.HostName = Environment.MachineName;

config.Tags = new[] { "team:platform", "component:api" };

config.BatchSize = 100;

config.FlushInterval = TimeSpan.FromSeconds(10);

config.EnableTracing = true;

config.EnableProfiling = true;

});

OpenTelemetry Provider

options.UseOpenTelemetry(config =>

{

config.Endpoint = "http://localhost:4317"; // OTLP endpoint

config.Protocol = OtlpProtocol.Grpc; // or OtlpProtocol.Http

config.Headers = new Dictionary<string, string>

{

{ "api-key", "your-api-key" }

};

config.BatchSize = 100;

config.FlushInterval = TimeSpan.FromSeconds(10);

config.DefaultTags = new Dictionary<string, string>

{

{ "service", "myapp" },

{ "environment", "production" }

};

});

Note: OpenTelemetry exports to Prometheus, Jaeger, Grafana, and other OTLP-compatible backends.

Application Insights Provider

options.UseApplicationInsights(config =>

{

config.InstrumentationKey = "your-instrumentation-key";

config.ConnectionString = "your-connection-string";

config.CloudRoleName = "myapp";

config.CloudRoleInstance = Environment.MachineName;

config.EnableAdaptiveSampling = true;

config.SamplingRate = 0.1; // 10%

config.EnableDependencyTracking = true;

config.EnablePerformanceCounters = true;

});

Distributed Tracing

options.EnableDistributedTracing = true;

options.TracingOptions = new TracingOptions

{

ServiceName = "myapp",

ServiceVersion = "1.0.0",

SamplingRate = 0.1, // Sample 10% of traces

PropagationFormat = TracePropagationFormat.W3C,

EnableSqlTracking = true,

EnableHttpTracking = true,

MaxSpansPerTrace = 1000

};

Best Practices

Do's

- ✅ Use meaningful metric names (dot notation:

orders.processed,users.active) - ✅ Add relevant tags for filtering (tenant, region, environment)

- ✅ Set appropriate alert thresholds based on baselines

- ✅ Use distributed tracing for multi-service requests

- ✅ Monitor both technical and business metrics

Don'ts

- ❌ Don't submit high-cardinality metrics (unique IDs in tags)

- ❌ Don't block on metric submission (always async)

- ❌ Don't ignore metric submission failures

- ❌ Don't submit metrics in tight loops without batching

- ❌ Don't expose sensitive data in metric names or tags

Dashboard Best Practices

Key Metrics to Monitor

- Golden Signals: Latency, Traffic, Errors, Saturation

- RED Metrics: Rate, Errors, Duration

- USE Metrics: Utilization, Saturation, Errors

- Business Metrics: Revenue, conversions, user activity

- SLA Metrics: Uptime, availability, performance

Alert Configuration

- Set alerts based on statistical baselines (not arbitrary thresholds)

- Use composite alerts (multiple conditions)

- Implement escalation policies

- Avoid alert fatigue (tune sensitivity)

- Document alert runbooks

Troubleshooting

Metrics Not Appearing

- Verify provider is configured correctly

- Check provider connectivity and authentication

- Ensure metric names follow provider conventions

- Review batching and flush interval settings

- Check provider health check status

High Overhead

- Reduce metric submission frequency

- Enable batching with appropriate batch size

- Use sampling for high-volume metrics

- Disable tracing for high-traffic endpoints

- Review metric cardinality (avoid unique IDs in tags)

Missing Tenant Context

- Verify tenant resolution middleware is registered

- Ensure tenant context is available at metric submission

- Check tenant tag is included in metric metadata

- Review tenant isolation configuration

- Test with explicit tenant context

Security Considerations

Metric Data Protection

- Don't include PII in metric names or tags

- Use tenant isolation for multi-tenant data

- Encrypt metrics in transit (HTTPS, TLS)

- Control access to monitoring dashboards

- Audit metric access and queries

Provider Security

- Use secure credential storage (Key Vault, Strongbox)

- Rotate API keys regularly

- Limit provider permissions to minimum required

- Monitor for unauthorized access

- Use network security groups to restrict access

Migration Guide

From Application Insights

If migrating from Azure Application Insights:

- Install Riptide.Platform.Monitoring.Bootstrap

- Keep existing Application Insights configuration initially

- Add Riptide monitoring alongside Application Insights

- Gradually migrate custom metrics to Riptide

- Update dashboards to use Riptide metrics

- Remove direct Application Insights dependencies

From Prometheus

If migrating from Prometheus:

- Install Riptide.Platform.Monitoring.Bootstrap

- Configure OpenTelemetry provider to export to your Prometheus instance

- Update metric names to Riptide conventions

- Migrate custom exporters to Riptide providers

- Update alerting rules

- Test metric compatibility

Note: Use the OpenTelemetry provider to send metrics to Prometheus. Prometheus natively supports OTLP ingestion.

Support & Resources

- API Reference: Monitoring API Documentation

- User Guide: Monitoring Guide

- Sample Application: Basic Web API Sample

- Specifications: Monitoring Specification

Riptide Monitoring Component - Comprehensive observability for modern applications.

SDK Persistence Component

Overview

The Riptide SDK Persistence Component is an enterprise-grade data access framework for .NET 8.0 applications that provides consistent database operations, automatic tenant isolation, connection management, and transaction coordination across multiple database providers. It enables development teams to build data-driven applications with confidence while maintaining security, performance, and operational efficiency.

Purpose

Modern applications require sophisticated data persistence that supports multiple database providers, enforces tenant isolation, manages connections efficiently, and provides reliable transaction handling. Traditional data access approaches often result in inconsistent implementations, security vulnerabilities, connection leaks, and complex multi-database scenarios. Riptide Persistence solves this by:

- Abstracting Database Providers: Support SQL Server, PostgreSQL, and other databases through unified interface

- Enforcing Tenant Isolation: Automatic tenant filtering at the data access layer

- Managing Connections: Connection pooling, health monitoring, and automatic retry

- Coordinating Transactions: Distributed transaction support across multiple databases

- Optimizing Performance: Query caching, bulk operations, and connection efficiency

- Simplifying Development: Repository patterns and query builders

- Ensuring Reliability: Automatic retry policies and circuit breaker patterns

Key Capabilities

Multi-Database Support